In the last 6 months, Esperion’s share price has risen 3.3x.

Could Esperion’s growth contribute to revenue growth at Bluejet and Neuland?

@phreakv6 Curious what the impact would be on Esperion?

This is going to be part rant, part insight, mostly on the topic of AI. I haven’t written here in a while. I notice most of my recent posts start with this, so clearly my frequency of writing has come down drastically, and there are a few reasons for that as well.

AI has come a long way in the last few months and has become very good at creating and managing small/medium-sized projects. I have personally been using Claude Code/Codex to maintain phreakonomics and also to build a handful of other tools for myself. I would highly recommend that even non-technical people give it a shot: if not Claude Code, then at least Codex, which comes as part of the ChatGPT Plus plan, just to get a taste of things. Maybe start by building Python notebooks for analysing data, or some such small but useful endeavour that is bound to get you hooked for life (in a good way).

AI companies have been releasing browsers like Comet and Atlas, but these come with significant downsides that you should be aware of. The fast/instant models they default to are terrible, absolutely terrible, at reasoning, and to lower costs they never reason at all. As a consequence, they consistently mislead you, and unless you are cross-checking, working with someone who double-checks your work, or working on something that verifies itself, this is going to be very costly and dangerous in the long run.

The VP forum has been filled with AI posts, or posts likely written with input from AI, where the information is sometimes patently false. While it is tempting to refute these with correct information, that is a lot harder to do. Essentially, it is easier to generate bullshit than it is to refute it (Brandolini’s law, or the bullshit asymmetry principle). So in the long run this is going to lead to a race to the bottom, where people one-up each other in generating more and more slop, which discourages those who try to put out insights from hard work. This is already happening elsewhere on the internet, where slop crowds out genuine signal; it’s sad that it’s happening on VP too.

Like any genuinely useful technology with far-reaching implications, it is bound to be used for both good and bad. On the internet, too, we had animated GIFs and those annoying scrolling and blinking text before we had e-commerce. That says nothing about the technology itself, but rather about how powerful tools in the hands of many can lead to outcomes as widely variable as the population itself.

That leads us to the AI buildout and the crazy $1 trillion in deals. While it’s easy to shoot this down as crazy and a bubble, there’s a reason these deals are structured the way they are. There isn’t enough capital in the system to pursue the buildout, so instead of waiting for capital formation (which could take several years), companies are making deals with equity, sharing the risk and betting big on AI together. Companies are determined not to go obsolete, having watched and learnt from the stories of Blockbuster and Kodak, so these kinds of deals are a way of diversifying risk.

But is it really diversifying risk? IMO, it’s concentrating it. Earlier, if OpenAI went bust, it would be just one company going bust, but with the deals it has made, it can take the entire ecosystem down with it. Imagine a scenario where hundreds of billions of dollars are invested, but models become exponentially more efficient and the capacity built cannot justify the RoI for several years. IMO this is somewhat unlikely, because demand is growing exponentially, doubling every few months as of now. Even if models become more efficient, demand will most likely catch up, so this risk is not existential.

However, the bet is not just on AI by US tech, but on AI being largely centralised in data centres. If China can build a decentralised AI that runs on phones/PCs and steals a large portion of this demand (similar to what Apple/PCs did to mainframes), it could be an existential risk of sorts, perhaps bigger than any war China could win against the West. This is somewhat likely, but these sorts of model-architecture improvements can only come from people not actively invested in centralised AI.

The other interesting thing about all this is that the capital that got concentrated in the hands of a few post-GFC, mostly tech and finance companies, is finally going to find its way into capital goods companies. Despite the deals and capital arrangements, the final bottleneck is going to be capital goods. The buildout will require massive capex, and even a company like TD Power, with 30% RoCE, 2x capital turns and 100% reinvestment, can only grow its topline at 60% max from internal accruals. So there is going to be borrowing and a massive capital cycle, because capital goods companies will have to grow at a ridiculous pace to keep up with the investments, and moving atoms into specific configurations requires more capital than moving bits.
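A back-of-the-envelope sketch of the internal-accrual ceiling, assuming RoCE is earned on opening capital employed, reinvested profit is added to capital employed, and capital turns (sales/capital employed) stay constant. These are simplifying assumptions, and `internal_accrual_growth` is a hypothetical helper, not a model of TD Power’s actual accounts. Under these assumptions the topline added in a year equals RoCE × reinvestment × turns, i.e. 60% of opening capital employed; since the existing topline is already 2x capital, that is 30% growth on the topline itself.

```python
def internal_accrual_growth(roce: float, capital_turns: float,
                            reinvestment_rate: float,
                            capital: float = 100.0) -> dict[str, float]:
    """One year of growth funded only by internal accruals (no new debt or equity).

    Simplifying assumptions: RoCE is earned on opening capital employed,
    reinvested profit is added to capital employed, and capital turns
    (sales / capital employed) stay constant.
    """
    revenue = capital * capital_turns                 # opening topline
    reinvested = capital * roce * reinvestment_rate   # profit ploughed back
    new_capital = capital + reinvested
    new_revenue = new_capital * capital_turns         # same turns on the bigger base
    return {
        "added_revenue_vs_capital": (new_revenue - revenue) / capital,
        "topline_growth": new_revenue / revenue - 1,
    }


# Figures from the post: 30% RoCE, 2x capital turns, 100% reinvestment.
result = internal_accrual_growth(roce=0.30, capital_turns=2.0, reinvestment_rate=1.0)
print({k: round(v, 2) for k, v in result.items()})
```

Under these assumptions `topline_growth` reduces to RoCE × reinvestment rate whatever the turns are; higher turns only raise how much topline each rupee of accrual can support, which is why the buildout still points to borrowing.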

Talking of atoms and bits, where does this leave intelligent beings like us in the long run? Ever since we started talking of data centres in MW and GW, I realise we are talking of intelligence in terms of watts. AI is able to build code that would take 20 10x engineers 6 months in an hour or two at most. This efficient conversion of energy into intelligence is going to make us more and more redundant in things that require intelligence. It’s not a coincidence that the GLP-1 theme is running alongside the data centre theme.

One thing I do see us holding the fort on is taste. It’s subjective, and people with good taste (I mean Jobs/Ive kind of taste) are perhaps going to be more valuable in the future. The other things AI lacks are agency and the ability to dream, so dream, move and get things done!

These thoughts have been on my mind over the last several weeks/months. They are very disjointed but all on the topic of AI, so I thought I would put them out in one post.

A lot is being written about whether AI is in a bubble or not. I came across the Plain English podcast by Derek Thompson, where he discussed both sides of the issue with two different guests, which I find rare. People tend to get fixated on one side of any discussion. You may enjoy these two episodes.

Everybody thinks AI is bubble. What if they are wrong

This is how the AI bubble could burst

There is demand, and an opportunity to burn trillions, but ROI is likely to be a dud because of competition and commoditisation. The risk can become existential for frontier labs like OpenAI and Anthropic. I won’t be surprised if Uncle Sam ends up buying a stake in OpenAI as the slop hits the fan.

OpenAI itself is trying to build a consumer device that can run GPT-5 locally, so stay tuned. This will be bad for hyperscalers in the short term (a glut); in the long term, Jevons paradox applies.

It’s just mimicking intelligence and reasoning; they’re dumb. LLMs in their current shape won’t get past the Copilot phase, and humans will still be needed.

“Overall, the models are not there,” Karpathy said on the podcast. “I feel like the industry is making too big of a jump and is trying to pretend like this is amazing, and it’s not. It’s slop.”

A possibly opposing thought, from a few philosophical musings by Geoffrey Hinton and David Deutsch across several podcasts:

AI is able to dream, but not in the sense in which humans experience a dream sequence. It is analogous to the age-old human quest of ‘wanting to fly like a bird’: we do fly now, just not like a bird, flapping wings etc. We have fixed mechanical wings based on alternative adaptations of the laws of physics rather than biological adaptation. A bird would not approve of our flying style :). Another popular example: however it is advertised, a ‘home meal ready’ from an outside establishment is not equivalent to my home meal (or my friend’s home meal).

So when LLMs throw out random connections, even though they may be clearly wrong from the other side of the screen, i.e. to us, the machine has indeed “hallucinated”. Perhaps our point of view for evaluating it needs to change to that of interpreting a dream, which is subjective.

If we don’t want the machine to hallucinate, then we have to fix the algorithms so that they do not explore the incorrect paths.

We are now entering an era where the upcoming question is whether LLMs are in the grey zone of being sentient.

They can only modify data in complex but rigid ways. They cannot modify or analyse anything beyond what is in their code. It is an old story, like ATMs replacing bank tellers, yet both coexist. Further improvements will come as computing power gets a further boost, but…

You do realize it’s a black box we’re dealing with, right?

“fixing” requires making drastic changes in LLM architectural frameworks.

I was listening to Vishal Sikka earlier today talking about how LLMs invent fictional code libraries while generating code, and how bad actors are publishing malicious codebases under those library names on GitHub, causing people to download them and get hit with ransomware.

Love reading your thoughts, but hard disagree here. Just writing some code is one thing; production-ready code and software engineering are a different ballgame altogether. In my view, this statement is an extreme exaggeration on that front.

You have every right to disagree. I don’t disagree with that. But Phreak is not a regular member like me.

My ability ends with titles and paragraphs; his, with books and concepts.

His library.

We had a new project in our company

A customer wanted a proof of concept that could read bank statements irrespective of which bank they were from.

I used Claude to build one based on React, Fastify and Python pdfplumber. It was written entirely by Claude, though I only had it work on one small function at a time. I then used HAProxy to host it, with PostgreSQL as the backend, all in about a day. Mind you, other than Python, my experience with everything else is zero.

The programmer who also tackled the same problem used Django, took double the time, and still had to use my Python code to read the statements.

My Python code was also built by Claude; he tried with Claude too but couldn’t get the same accuracy.

The point I’m trying to make is that in the future, as always in the past, the people who can best use the tools will generally be more productive.

AI at the moment is just a tool, but it’s a powerful one.

When road lamps in London were lit by oil, there was a man responsible for lighting all of them. His job was replaced by the electric switch.

But AI is scary, as it does and will replace a lot of things. I frequently use it to understand legal documents and to automate processes that used to take hours and now take an instant.

It’s going to get worse, because at one end there is over-investment and at the other it’s replacing employment.

There is already a robot slave being offered for sale, the NEO Home Robot, and with today’s Tesla vote giving Musk a $1 trillion package, robots like it will become powerful very soon as he pours research into the field.

The first iPhone didn’t even support 3G and didn’t do email as well as BlackBerry.

Technology only needs a start, the iterations iron out the inefficiencies more quickly than we can blink

Maybe the nature of each individual’s job will be elevated, and with more efficiency, more opportunities will emerge. With the arrival of computers and the internet, individual productivity and the nature of work improved many times over, and a similar revolution may come.

AI is a powerful tool and will make the person handling it more powerful.

Just to be clear, I agree about the huge productivity gains. I just don’t agree with the magnitude being portrayed above, especially this statement: “AI is able to build code that would take 20 10x engineers 6 months in an hour or two at most.” Also, the gains for rapid prototyping are far greater than for real software engineering (though those are also hugely significant).

Machine learning models will be a classic example of compounding in motion. Abilities that seem highly exaggerated today will probably be taken for granted a year down the line. The point stands: the definition and utility of intelligence itself are changing, and far-reaching consequences are certain, including in education, testing, coding and anything else with a sizeable amount of available data.

I think the jobs of the future will go to those who can get the right code out of AI in the shortest possible time.

I was speaking to someone at a hedge fund

Hedge funds are constantly evolving as market conditions change and they attract some of the best minds because they have a huge pool to choose from as many graduates apply for internships and roles there

When they develop a system with AI, the next question they ask the AI is “What could we have done or asked differently that would have helped you get to the answer faster?”

I’ve been coding automation with AI. One colleague who left was never replaced, and another was temporary and we didn’t renew their contract. I am more or less jobless, and my only other colleague is scared her job is shrinking, as she doesn’t have anything to do all day. Not long ago, at least three of them were really busy all day!

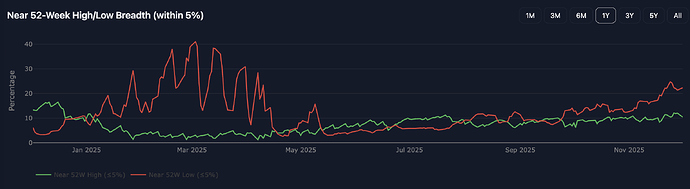

Markets have been sideways, but there’s been a lot of noise about poor breadth. I have been analysing this over the past couple of months with various tools, and here I am presenting my findings. Some are obvious, but some are not.

The most common way to look at breadth is stocks above 200dma. It shows a bit of weakness but there’s nothing glaringly off here.
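A minimal sketch of the “stocks above 200 DMA” measure, assuming a dict of daily closing prices per stock (oldest first) and a simple, not exponential, moving average. The function name and data layout are hypothetical, not the tools behind the charts here.

```python
from statistics import mean


def pct_above_dma(closes_by_symbol: dict[str, list[float]], window: int = 200) -> float:
    """Percentage of stocks whose latest close is above their simple moving average.

    Stocks with fewer than `window` closes are skipped, not counted as below.
    """
    eligible = above = 0
    for closes in closes_by_symbol.values():
        if len(closes) < window:
            continue
        eligible += 1
        sma = mean(closes[-window:])
        if closes[-1] > sma:
            above += 1
    return 100.0 * above / eligible if eligible else 0.0


# Toy data: one steadily rising stock, one steadily falling stock,
# and one recent listing without 200 days of history.
sample = {
    "RISING": [float(p) for p in range(1, 201)],
    "FALLING": [float(p) for p in range(200, 0, -1)],
    "NEW_LISTING": [10.0] * 50,
}
print(pct_above_dma(sample))
```

Skipping short histories (rather than counting them as below) keeps recent listings from dragging the number down; either choice is a judgment call.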

Next, the number of stocks hitting 52-week highs/lows. There’s a bit of pain seen here, with ~12% of stocks hitting 52-week lows at the peak (Nov 25th).

A slightly better way to visualise the previous chart is to look at stocks within 5% of their 52-week highs and within 5% of their 52-week lows, which is a bit broader and includes short-term pullbacks. Here the pain is more apparent, with 20%+ of stocks near their 52-week lows.
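Both 52-week views above can come from one hypothetical helper with a `tolerance` parameter: `0.0` counts stocks closing at the high/low itself, `0.05` counts stocks within 5% of it. This sketch assumes 252 trading days stand in for 52 weeks and uses closing prices; exchange-published 52-week highs/lows typically use intraday highs and lows, so counts from real data may differ slightly.

```python
def pct_near_52w_extremes(closes_by_symbol: dict[str, list[float]],
                          tolerance: float = 0.0,
                          window: int = 252) -> tuple[float, float]:
    """Return (% of stocks near their 52-week high, % near their 52-week low).

    A stock in a very tight range can count towards both.
    """
    eligible = near_high = near_low = 0
    for closes in closes_by_symbol.values():
        if len(closes) < window:
            continue  # less than a year of history
        year = closes[-window:]
        last, high, low = closes[-1], max(year), min(year)
        eligible += 1
        if last >= high * (1 - tolerance):
            near_high += 1
        if last <= low * (1 + tolerance):
            near_low += 1
    if not eligible:
        return 0.0, 0.0
    return 100.0 * near_high / eligible, 100.0 * near_low / eligible


sample = {
    "AT_HIGH": [float(p) for p in range(1, 253)],
    "AT_LOW": [float(p) for p in range(252, 0, -1)],
    "PULLBACK": [float(p) for p in range(100, 351)] + [340.0],  # ~3% off its high
}
print(pct_near_52w_extremes(sample))                  # exact highs/lows
print(pct_near_52w_extremes(sample, tolerance=0.05))  # within 5%
```

The `PULLBACK` stock only shows up in the 5% view, which is exactly the short-term-pullback effect the broader chart is meant to capture.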

But what makes this odd is that large caps are living in a world of their own. Comparing 52-week lows now vs Jan-Feb shows how different it is. The number of stocks hitting 52-week highs is a whopping 44%, the highest it has been this year! Nifty50 has 22/50 stocks near 52-week highs and only 3 near 52-week lows (ITC, Trent, Tata Motors). In a way, we are in a market similar to 2018, when a similar divergence was seen.

This divergence started picking up steam last month, and I suspect it’s going to get stronger from here.

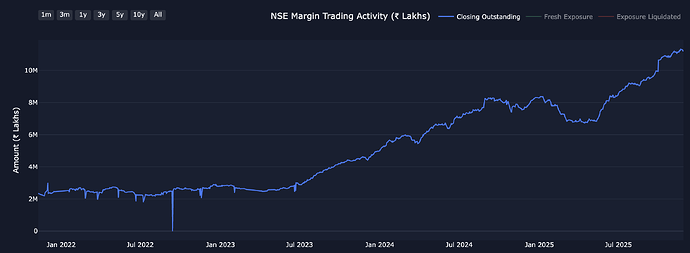

There is one more data point that I have been following since 2019 which shows (a part of) the leverage in the system: margin funding. I have seen this number grow from Rs.12k Cr pre-Covid to Rs.1.12 lakh Cr now (roughly a 9x increase).

This by itself is not a concern and is in proportion to growth in overall market and also efforts made by SEBI to deepen the cash market. In fact they are still talking of deepening this further. But the key issue is where this money has been going. This is where things get interesting.

This is the margin split by market cap. From around Jan '22 to Nov '25, large and midcaps have seen about a 4x increase, while small/micros have seen about a 7x increase, so much so that smallcap leverage today is higher than even largecap leverage (my definitions by market cap: micro Rs 1k-5k Cr, small 5k-25k Cr, mid 25k-100k Cr and large 100k Cr+). We know how illiquid these categories are, and how far this much of an increase in leverage goes towards generating returns.
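A minimal sketch of splitting margin-funded positions by the market-cap buckets defined above, assuming per-stock market cap and margin-funded amounts, both in Rs Cr, are available. The function names are hypothetical; treating a stock exactly on a boundary as belonging to the larger bucket, and keeping stocks below Rs 1k Cr separate, are assumptions, since the post does not specify them. Tracking these totals over time gives the ~4x vs ~7x comparison.

```python
from collections import defaultdict

# Market-cap floors in Rs Cr, following the post's definitions
# (micro 1k-5k, small 5k-25k, mid 25k-100k, large 100k+).
BUCKET_FLOORS = [(100_000, "large"), (25_000, "mid"), (5_000, "small"), (1_000, "micro")]


def bucket(mcap_cr: float) -> str:
    """Map a market cap (Rs Cr) to its bucket; boundaries go to the larger bucket."""
    for floor, name in BUCKET_FLOORS:
        if mcap_cr >= floor:
            return name
    return "below micro"


def margin_by_bucket(positions: list[tuple[float, float]]) -> dict[str, float]:
    """Sum margin-funded amounts (Rs Cr) per bucket.

    positions: one (market_cap_cr, margin_funded_cr) pair per stock.
    """
    totals = defaultdict(float)
    for mcap_cr, margin_cr in positions:
        totals[bucket(mcap_cr)] += margin_cr
    return dict(totals)


# Toy positions, not real data.
toy = [(150_000, 500.0), (30_000, 200.0), (8_000, 120.0),
       (2_000, 80.0), (1_000, 10.0), (500, 5.0)]
print(margin_by_bucket(toy))
```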

Maybe falling expiry volumes have pushed a lot of these traders into trading small/micros on margin as well (the big jump has come since May '25, from 68k Cr to 112k Cr in Nov '25, and this is where the divergence towards small/micros picked up even more steam). Whatever the reason may be, the risk has been brewing here for some time.

The illiquidity shows up clearly here, where margin is divided by the median turnover in the stock over the last 90 days (rolling). This used to be 1x or lower across market cap categories from the time I started following it, but today the median small/micro has ~3.5x margin relative to turnover in the stock. Some have 5-10x or even more (which is why post-earnings announcement drift, or PEAD, stopped working as well as it did 2 years back).
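A minimal sketch of the margin-to-turnover ratio, assuming per-stock daily turnover in Rs Cr (oldest first) and the latest margin-funded amount in Rs Cr. The function names are hypothetical; this computes the latest point only, and recomputing it each day would give the rolling series. “At the median” is taken as the median ratio across the stocks in a bucket.

```python
from statistics import median


def margin_to_turnover(margin_cr: float, daily_turnover_cr: list[float],
                       window: int = 90) -> float:
    """Margin-funded amount divided by median daily turnover over the trailing window."""
    recent = daily_turnover_cr[-window:]
    if not recent:
        raise ValueError("need at least one day of turnover")
    med = median(recent)
    return margin_cr / med if med > 0 else float("inf")


def bucket_median_ratio(stocks: list[tuple[float, list[float]]]) -> float:
    """Median margin/turnover ratio across the stocks in one market-cap bucket."""
    return median(margin_to_turnover(m, t) for m, t in stocks)


# Toy bucket, not real data: (margin_cr, daily turnover history in Rs Cr).
toy_bucket = [
    (35.0, [10.0] * 90),           # ratio 3.5
    (2.0, [2.0] * 100),            # only the last 90 days count, ratio 1.0
    (10.0, [1.0, 2.0, 3.0] * 30),  # median turnover 2.0, ratio 5.0
]
print(bucket_median_ratio(toy_bucket))
```

Using the median rather than the mean of daily turnover keeps a few block-deal days from making a stock look more liquid than it is.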

This of course cuts both ways. So far we have seen a gradual rise in how much we are willing to pay in valuations for these stocks. Compared to pre-Covid, today we are paying similar valuations for large/midcaps, but for small/micros the premium has gone up 50-60% (15-20 P/E has inflated to roughly 25-30 P/E today).

The other interesting thing is that despite good flows (FII/DII net flows, MF flows, PMS flows and MTF flows have all been very good this year), the market has only held up sideways, so it’s difficult to imagine what happens if these flows were to contract.

But I suspect that won’t happen, and this is by no means a bearish post on the whole market. There are a lot of banks (PSU banks like MAHABANK, Bank of Baroda etc., some of which have their best RoA and NPA ratios in decades but are valued as if this is the cycle top, which it very well might be), auto ancillaries (Fiem, Lumax, Sansera), hospitals and capital goods companies (like Cummins, TD Power) that are setting up well, with good charts/valuations and great earnings growth. But we are also in a tough macro environment where nominal GDP growth (actual measured growth) is poor at 8.7%, which means tax collections etc. for the govt are going to be weak (GST collections, with the recent cuts, also add pressure, as the recent Nov print shows). It will be hard for this govt to continue capex as it has in the past unless it cuts down on freebies.

All these point to a tough few quarters ahead, especially for small/micros, due to valuation and liquidity issues.

Disc: I have been reducing exposure to the market and raising cash levels. Every time I have done so in the past, something fundamental has changed within weeks and I have had to change my mind, so I remain flexible to do the same.

Inflation has moderated to 0.5%, which I have not seen in the last 10-15 years. There is little point in the RBI cutting rates further with USD/INR already hitting 90. GST collections were nearly flat for November, which will disturb the govt’s fiscal math. The upcoming budget might focus on a PPP model for infra/defence/railways, as private industry is sitting on some of its best cash levels in years. There is a good chance of budget cuts in railways/defence/infra, with the budget only addressing some crucial pain points. IIP has dropped to 0.4%, which shows the state of manufacturing, with some effect from the 50% tariffs as well. The time for sector-based calls is over; very few bets from hated sectors can survive the onslaught. It’s a pure skill-based game.

All the components of GDP – Private consumption, Government consumption, Gross capital formation, Net exports – grew slower than 8%! So what has driven this growth is ‘statistical discrepancies’, which swung from a small negative number to a large positive number. Statistical discrepancies is the factor used to align the GDP estimate as per the expenditure approach and the supply side (or sectoral) approach of estimating economic growth. Excluding discrepancies (admittedly not the right metric, but still) GDP growth for the quarter was just 4%.

Src: India Data Hub
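The “excluding discrepancies” figure can be written out as below. This is a sketch assuming year-on-year growth on quarterly real GDP measured by the expenditure approach; the symbols are ours, not India Data Hub’s: $C$ private consumption, $G$ government consumption, $I$ gross capital formation, $NX$ net exports and $SD$ statistical discrepancies, all for quarter $t$.

$$
Y_t = C_t + G_t + I_t + NX_t + SD_t
$$

$$
g_t = \frac{Y_t}{Y_{t-4}} - 1, \qquad
g_t^{\text{ex-SD}} = \frac{Y_t - SD_t}{Y_{t-4} - SD_{t-4}} - 1
$$

When $SD$ swings from a small negative number to a large positive one, $g_t$ can sit well above $g_t^{\text{ex-SD}}$, which is how headline growth can outpace every listed component.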

India’s economy is reportedly growing at an impressive 8.2%, yet the IMF has assigned its national accounts a ‘C’ grade due to methodological weaknesses. It has concerns over outdated base years, price deflation and data granularity. This might be a one-time boost due to the GST cuts, but let’s see how they report in upcoming quarters.

That is only one of the four parameters; the overall grade stays B for India, and that was in the same report. Also, whatever the issue is, it was there last quarter and last year as well. To me it looks like selective nitpicking to find negatives anyhow.