I was searching for AI plays in India and came across the following blog post by SOIC (@Worldlywiseinvestors): Daily Investor's Edge - Indian Proxies to AI Boom

Intellect caught my attention due to the bold claims made by its management. I will not cover Intellect’s core business, as it is a well-discovered company with extensive information already available on its BFSI technology offerings. What I find more compelling is the Purple Fabric optionality, which I believe the market is discounting. This appears to stem from management’s inability to clearly articulate and position the offering, or from marketing it in a manner that invites skepticism about the legitimacy of its claims.

To understand Purple Fabric, it is necessary to distinguish between two distinct AI value chains: Generative AI and Enterprise AI.

Generative AI (GenAI):

- Examples: ChatGPT, Gemini, Claude

- General-purpose intelligence that generates insights and content, operating outside core enterprise systems.

- Optimized for reasoning, summarization, search, and interaction with structured & unstructured data.

- Produces probabilistic outputs and cannot enforce policy, compliance, or decision ownership.

Enterprise AI:

- Examples: Purple Fabric, Palantir’s Foundry, C3.ai

- Outcome-driven intelligence embedded directly inside mission-critical workflows.

- Optimized for executing specific business decisions, not open-ended reasoning.

- Enforces business rules, regulatory controls, and full auditability, owning the decision end to end.

For a regulated entity like a Tier 1 global bank, GenAI is insufficient. A general-purpose model like GPT-5, while linguistically capable, lacks contextual awareness of the bank’s proprietary policies, the auditability required by regulators (e.g. the FCA or OCC), and connectivity to legacy systems. It operates probabilistically, which is dangerous in a deterministic domain like transaction processing or credit risk assessment.

Intellect’s management has positioned Purple Fabric as one of the leaders in Enterprise AI, claiming to be one of only three significant global players alongside Palantir and C3.ai (reportedly backed by an independent analyst study).

Purple Fabric

According to management, it is not a monolithic (single, giant) software tool but a composable ecosystem (a collection of separate, ready-to-use parts) designed to operationalise intelligence. It is built on a “zero waste” architecture philosophy, ensuring that every component serves a distinct, non-redundant purpose in the cognitive supply chain.

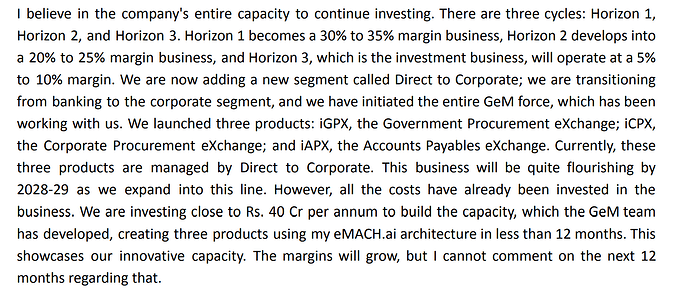

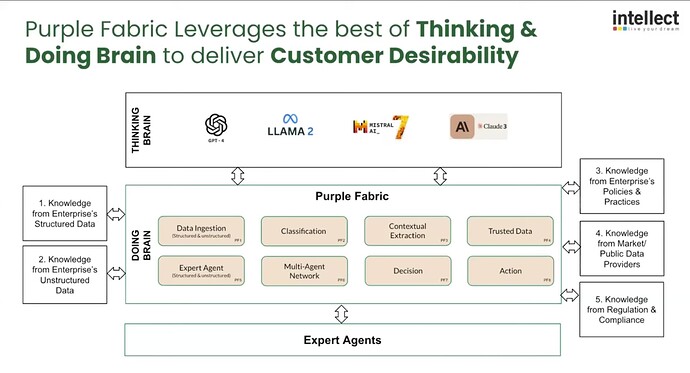

The platform is structured around 4 integrated stacks:

Enterprise Knowledge Garden (EKG)

- GenAI works by predicting the next likely word based on probability. In banking, “likely” isn’t good enough; you need “exact.” If you ask a standard AI about a risk policy, it might accidentally merge three different outdated documents because they contain similar keywords, leading to a “hallucination”. That’s where EKG comes in. Think of EKG not as a search engine, but as a curated library with a digital map.

- Instead of just dumping PDFs into a pile, the EKG ingests messy data (SQL databases, legal documents in PDF form, and emails) and organizes it into a Knowledge Graph: a structured web that explicitly links concepts. It doesn’t just find the words “Counterparty Risk”; it understands that Risk Policy A applies specifically to Derivative Type B, but not to Loan Type C.

- It uses MongoDB Atlas as a backbone to store vector embeddings alongside rich metadata. In practice, the system converts human text into long lists of numbers called vectors, which represent the meaning of the text. This allows the AI to understand that a query for “money laundering checks” matches a document about “anti-money laundering protocols,” even if the keywords don’t match exactly.

- As a result, when an AI agent asks, “What is the policy for underwriting?”, the EKG does not generate a creative answer. It acts as a retrieval engine that pulls the exact, authorized version of the policy from the database. It ensures the AI is reciting the actual rulebook, not just making a probabilistic guess.
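To make the “library with a digital map” idea concrete, here is a toy Python sketch of the two mechanisms described above: an explicit knowledge-graph lookup, and vector-similarity retrieval that returns stored text verbatim. It is not Intellect’s implementation. The policies, document IDs and three-number “embeddings” are all hypothetical; a real system would compute embeddings with a model and keep them in a vector index (such as MongoDB Atlas Vector Search) rather than a Python dict.

```python
import math

# Hypothetical knowledge-graph edges: (policy, product) pairs the policy governs.
APPLIES_TO = {
    ("Risk Policy A", "Derivative Type B"),
    ("Risk Policy D", "Loan Type C"),
}

# Hypothetical authorised documents: text is stored verbatim, never generated.
DOCUMENTS = {
    "aml-protocol-v2": "Anti-money laundering protocols: screen all payees daily.",
    "underwriting-v5": "Underwriting policy: debt-service ratio must exceed 1.25.",
}

# Toy 3-dimensional "embeddings"; a real system would get these from a model.
EMBEDDINGS = {
    "aml-protocol-v2": [0.9, 0.1, 0.0],
    "underwriting-v5": [0.1, 0.9, 0.2],
}


def cosine(a, b):
    """Cosine similarity: how close two vectors point, ignoring their length."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def retrieve(query_vector):
    """Return the id and exact stored text of the semantically closest document."""
    best_id = max(EMBEDDINGS, key=lambda doc_id: cosine(query_vector, EMBEDDINGS[doc_id]))
    return best_id, DOCUMENTS[best_id]


def policy_applies(policy, product):
    """Graph lookup: an explicit edge, not keyword overlap, decides applicability."""
    return (policy, product) in APPLIES_TO


# Pretend this vector is the embedding of the query "money laundering checks".
print(retrieve([0.8, 0.2, 0.1])[0])                           # aml-protocol-v2
print(policy_applies("Risk Policy A", "Derivative Type B"))   # True
print(policy_applies("Risk Policy A", "Loan Type C"))         # False
```

The division of labour is the point: the graph answers “does this policy apply?” with a yes/no edge, and the vector search only decides which authorised document to return, so nothing in the answer is invented by the model.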

Enterprise Digital Experts (EDE)

- Think of EDE not as generic chatbots / agents, but as specialized digital employees hired for specific banking jobs. Instead of a GenAI that tries to know everything, these are pre-configured software agents acting as a “Credit Officer”, “Claims Adjuster”, “Complaints Manager”, etc.

- They possess persistent memory (statefulness) which allows them to maintain the “context” of long-running transactions across multiple sessions.

- These experts have API access to the core banking layer (eMACH.ai), meaning they can autonomously pull a customer’s balance, update a risk score, or flag a transaction in the database.
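A “Digital Expert” with persistent memory and API access can be sketched as a role-specific object that keeps per-case notes and calls into the core banking layer. Everything here is hypothetical: `FakeCoreBanking` stands in for eMACH.ai (whose real API is not public in the material above), the 2x-balance exposure rule is invented, and the in-memory dict would be a database in any real deployment so that state survives restarts.

```python
from dataclasses import dataclass, field


class FakeCoreBanking:
    """Hypothetical stand-in for a core banking API (not eMACH.ai's real interface)."""

    def __init__(self):
        self.balances = {"CUST-1": 25_000}
        self.flags = []

    def get_balance(self, customer_id):
        return self.balances[customer_id]

    def flag_transaction(self, case_id, reason):
        self.flags.append((case_id, reason))


@dataclass
class CreditOfficerExpert:
    """A narrow, role-specific agent that remembers each case across sessions."""

    core: FakeCoreBanking
    # case_id -> running notes; a real system would persist this in a database.
    memory: dict = field(default_factory=dict)

    def handle(self, case_id, customer_id, requested_amount):
        notes = self.memory.setdefault(case_id, [])
        balance = self.core.get_balance(customer_id)  # tool call into core banking
        notes.append(f"balance={balance}, requested={requested_amount}")
        if requested_amount > 2 * balance:  # hypothetical exposure rule
            self.core.flag_transaction(case_id, "exposure exceeds 2x balance")
            notes.append("flagged for review")
            return "refer"
        notes.append("within limit")
        return "proceed"


core = FakeCoreBanking()
expert = CreditOfficerExpert(core)
print(expert.handle("CASE-7", "CUST-1", 80_000))  # refer
print(expert.handle("CASE-7", "CUST-1", 40_000))  # proceed
print(expert.memory["CASE-7"])  # notes from both sessions are still there
```

The second call sees the first call’s notes for the same case, which is what “statefulness” buys over a chatbot that starts every conversation from scratch.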

Enterprise Governance (PF Govern)

- Think of PF Govern as the “Compliance Officer” built inside the software. In banking, you cannot just let an AI loose; it must follow the same strict laws and safety rules that human employees do. This system ensures the AI never breaks the law or leaks secrets.

- For BFSI clients, governance is not a feature; it is a license to operate. PF Govern embeds 18+ specific AI guardrails, including PII redaction, toxicity filtering, and bias detection. It enforces strict entitlements, ensuring that an AI agent cannot access data that the human user invoking it is not authorized to see.

- Every decision made by a Digital Expert is logged, traceable back to the specific source document and the specific reasoning logic used. This “explainability” is critical for compliance with regulations like the EU AI Act and US banking standards.
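Two of the guardrails mentioned above, PII redaction and user entitlements, plus the audit trail, can be sketched in a few lines. This is an illustration only: the users, data domains and regex patterns are hypothetical, and production PII detection is far more thorough than two regular expressions.

```python
import re
from datetime import datetime, timezone

# Hypothetical entitlements: which data domains each human user may see.
ENTITLEMENTS = {"analyst_01": {"retail_loans"}, "cro_02": {"retail_loans", "treasury"}}
AUDIT_LOG = []

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")
ACCOUNT = re.compile(r"\b\d{8,12}\b")  # assumption: account numbers are 8-12 digits


def redact_pii(text):
    """Mask e-mail addresses and account-like numbers before text reaches a model."""
    return ACCOUNT.sub("[ACCOUNT]", EMAIL.sub("[EMAIL]", text))


def governed_call(user, domain, text, source_doc, decide):
    """Run `decide` only if the invoking user is entitled, and log every outcome."""
    if domain not in ENTITLEMENTS.get(user, set()):
        AUDIT_LOG.append({"user": user, "domain": domain, "result": "DENIED"})
        raise PermissionError(f"{user} is not entitled to {domain}")
    safe_text = redact_pii(text)
    result = decide(safe_text)
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "domain": domain,
        "source_doc": source_doc,  # traceability back to the governing document
        "input": safe_text,
        "result": result,
    })
    return result


decision = governed_call(
    "analyst_01", "retail_loans",
    "Customer jane@example.com, account 123456789, asks for a top-up.",
    "underwriting-v5",
    lambda t: "review" if "[ACCOUNT]" in t else "ok",
)
print(decision)                 # review
print(AUDIT_LOG[-1]["input"])   # Customer [EMAIL], account [ACCOUNT], asks for a top-up.
```

The entitlement check runs before the model sees anything, so an agent acting for `analyst_01` simply cannot reach `treasury` data, and both allowed and denied calls leave a log entry.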

LLM Optimization Hub (MOH)

- Relying on a single LLM (e.g. OpenAI’s GPT-4) exposes the enterprise to pricing volatility and vendor risk. Purple Fabric is LLM-agnostic.

- The Optimization Hub acts as a router, benchmarking various models (GPT-4, Claude, Llama 3, Mistral) against specific tasks based on three constraints: Speed, Cost, and Accuracy. A simple extraction task might be routed to a low-cost, high-speed model (like Llama-3-8B), while a complex legal reasoning task is routed to a frontier model (like GPT-4). This dynamic arbitrage ensures the most cost-effective execution of cognitive tasks.
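The routing logic described above boils down to a constrained choice: among the models that clear an accuracy bar for the task and fit a latency budget, pick the cheapest. A minimal sketch, assuming a hand-maintained benchmark table; the model names echo the text, but every price, latency and score below is made up.

```python
from dataclasses import dataclass


@dataclass
class ModelProfile:
    name: str
    cost_per_1k_tokens: float  # USD, made-up figure
    latency_ms: int            # typical response time, made-up figure
    accuracy: dict             # task type -> benchmark score in [0, 1], made-up


MODELS = [
    ModelProfile("llama-3-8b", 0.0002, 300, {"extraction": 0.95, "legal_reasoning": 0.60}),
    ModelProfile("mistral", 0.004, 900, {"extraction": 0.96, "legal_reasoning": 0.80}),
    ModelProfile("gpt-4", 0.03, 2500, {"extraction": 0.97, "legal_reasoning": 0.92}),
]


def route(task_type, min_accuracy, max_latency_ms):
    """Return the cheapest model that clears the accuracy floor and latency budget."""
    eligible = [
        m for m in MODELS
        if m.accuracy.get(task_type, 0.0) >= min_accuracy and m.latency_ms <= max_latency_ms
    ]
    if not eligible:
        raise ValueError(f"no model meets the constraints for {task_type!r}")
    return min(eligible, key=lambda m: m.cost_per_1k_tokens).name


print(route("extraction", min_accuracy=0.90, max_latency_ms=1000))       # llama-3-8b
print(route("legal_reasoning", min_accuracy=0.90, max_latency_ms=5000))  # gpt-4
```

The “arbitrage” lives entirely in the benchmark table: re-run the benchmarks when a cheaper model improves and the same routing rule starts sending it more work.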

Beneath these high-level stacks lie eight specific technologies developed over a decade of R&D (representing ~20 million engineering hours, according to management). These technologies form the platform’s operational sequence, which runs in two phases.

Phase 1: The Document Intelligence Management System (DIMS)

- Ingestion Technology: This layer manages the aggregation of data from three streams:

- Structured Data (Core Banking, ERPs).

- Documents (PDFs, Images) and Web Crawls.

- Paid Third-Party Feeds (Dun & Bradstreet).

- Classification Technology: Uses AI to sort and classify data (e.g. distinguishing a tax form from a legal notice).

- Extraction Technology: A context-aware extraction engine that pulls semantic meaning from documents. It doesn’t just read text; it understands fields in the context of the specific business domain (e.g. extracting the expiry date from a trade instrument).

- Trusted Data Technology: A validation layer that prevents AI hallucinations by cross-referencing extracted data against verified internal (and possibly external) sources before it enters the banking system.
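The four DIMS steps map naturally onto a small pipeline. The sketch below uses keyword rules where Intellect would presumably use trained models, and a hypothetical `TRUSTED_INSTRUMENTS` table as the “verified source”; the document format and the instrument ID `LC-1042` are invented. What it does show is the ordering: classification decides which fields to extract, and validation runs before anything is accepted.

```python
import re
from datetime import date

# Hypothetical verified reference data (e.g. from the core banking system).
TRUSTED_INSTRUMENTS = {"LC-1042": date(2026, 3, 31)}


def classify(text):
    """Keyword rules standing in for an ML document classifier."""
    lowered = text.lower()
    if "letter of credit" in lowered:
        return "trade_instrument"
    if "tax" in lowered and "form" in lowered:
        return "tax_form"
    if "notice" in lowered:
        return "legal_notice"
    return "unknown"


def extract(doc_type, text):
    """Pull domain fields; an expiry date only matters for trade instruments."""
    if doc_type != "trade_instrument":
        return {}
    ref = re.search(r"\b(LC-\d+)\b", text)
    expiry = re.search(r"expir(?:y|es)(?: date)?:?\s*(\d{4}-\d{2}-\d{2})", text, re.IGNORECASE)
    return {
        "reference": ref.group(1) if ref else None,
        "expiry": date.fromisoformat(expiry.group(1)) if expiry else None,
    }


def validate(fields):
    """Trusted-data check: accept only if extracted values match verified records."""
    expected = TRUSTED_INSTRUMENTS.get(fields.get("reference"))
    return expected is not None and expected == fields.get("expiry")


def process(text):
    doc_type = classify(text)
    fields = extract(doc_type, text)
    return doc_type, fields, validate(fields)


print(process("Letter of Credit LC-1042. Expiry date: 2026-03-31."))  # ... True
print(process("Letter of Credit LC-1042. Expiry date: 2026-04-30.")[2])  # False
```

A misread date is caught by the validation step rather than being written into the banking system, which is the behaviour the Trusted Data layer is described as providing.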

Phase 2: The Cognitive Orchestration Layer

Once the data is refined by DIMS, the next four technologies drive the decision making.

- Digital Expert Creation: The framework for instantiating the digital personas mentioned earlier.

- Operation Room Simulation: A virtual runtime environment where multiple Digital Experts coexist and collaborate on a problem.

- Socrates Dialogue Algorithm: Intellect’s fancy name for a Multi-Agent Debate system. Instead of asking one AI to give an answer (which might be wrong or “hallucinated”), the system assigns different AI “personas” to argue with each other before presenting the final result to the user.

- Example: Agent A makes a proposal (“Approve this loan”). Agent B (the Skeptic) reviews Agent A’s reasoning and attacks it (“But the collateral value is outdated”). Agent C (the Mediator) synthesizes the points. They continue this back-and-forth “dialogue” until they resolve contradictions and agree on a final, fact-checked answer.

- Recommendation & Traceability: The final output is not just a decision but a Recommendation File. This artifact contains the full lineage of the decision (data sources, agent dialogue, and logic path) and is ready for human review and regulatory audit if needed.
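The debate loop itself is simple to express. In this sketch the three “personas” are plain rule-based functions standing in for LLM calls, and the loan figures, the 365-day staleness rule and the source file name are hypothetical. The structure is the point: propose, critique, fold accepted objections back in, repeat until no objection remains or a round limit is hit, and keep the whole transcript as the recommendation’s lineage.

```python
from dataclasses import dataclass


@dataclass
class LoanCase:
    amount: float
    collateral_value: float
    collateral_valued_days_ago: int
    sources: list


def proposer(case, revisions):
    """Agent A: proposes a decision, addressing objections the mediator accepted."""
    if "stale_collateral" in revisions:
        return "approve subject to fresh collateral valuation"
    return "approve" if case.collateral_value >= case.amount else "decline"


def skeptic(case, proposal):
    """Agent B: attacks the proposal; returns an objection code or None."""
    if proposal == "approve" and case.collateral_valued_days_ago > 365:
        return "stale_collateral"
    return None


def mediator(objection, revisions):
    """Agent C: records a valid objection so the proposer must address it."""
    if objection:
        revisions.add(objection)


def socratic_dialogue(case, max_rounds=5):
    if max_rounds < 1:
        raise ValueError("need at least one round")
    revisions, transcript = set(), []
    for round_no in range(1, max_rounds + 1):
        proposal = proposer(case, revisions)
        objection = skeptic(case, proposal)
        transcript.append({"round": round_no, "proposal": proposal, "objection": objection})
        if objection is None:
            break
        mediator(objection, revisions)
    return {  # the "Recommendation File": decision plus its full lineage
        "recommendation": proposal,
        "sources": case.sources,
        "dialogue": transcript,
        "resolved": objection is None,
    }


result = socratic_dialogue(LoanCase(100_000, 150_000, 400, ["valuation-2023.pdf"]))
print(result["recommendation"])  # approve subject to fresh collateral valuation
print(len(result["dialogue"]))   # 2
```

With real LLM personas the functions become prompts, but the round limit and the `resolved` flag still matter: a debate that never converges should surface to a human rather than quietly return the last proposal.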

Demo of Purple Fabric: https://youtu.be/QK27658JXIU?t=1890

Now let’s tackle the bold claims being made by the management.

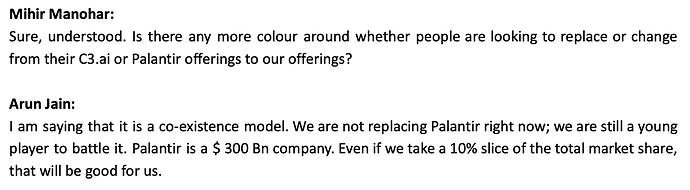

Intellect has positioned Purple Fabric in an elite bracket, claiming parity with Palantir and C3.ai as the only three viable Enterprise AI platforms globally.

What differentiates Purple Fabric from Palantir & C3 AI?

When asked on the Q1 FY26 conference call, this is what management replied:

Claim 1: Intellect is the “first to trademark ‘Enterprise Knowledge Garden’”

Findings:

- I found the following trademark filed by Intellect, which has been approved: https://www.trademarkia.in/enterprise-knowledge-garden-6875024

- Does it matter? No. It does not protect the underlying technology, code, or method of “vectorizing knowledge.” It simply gives Intellect the exclusive right to use the specific phrase ‘Enterprise Knowledge Garden’ when selling financial services.

Claim 2: Concept of vectorizing knowledge alongside a data lake is unique and not provided by Palantir or C3.ai

Findings:

- Palantir’s core technology, the Ontology, performs this exact function. It connects structured data (Data Lake) with unstructured logic and semantics. With Palantir AIP, they explicitly integrate vector databases and embeddings to allow LLMs to query the Ontology. Palantir’s architecture (Ontology + AIP) is functionally identical to, and probably more mature than, Intellect’s “EKG + Data Lake” model.

- C3.ai uses a “Type System” and has a “Unified Knowledge Source” architecture that specifically combines structured enterprise data with unstructured data using retrieval-augmented generation (RAG).

- To conclude, Intellect is using EKG as a branding differentiator for a standard GenAI architecture (RAG + Semantic Layer) that competitors already possess under different names (Ontology, Type System).

Claim 3: Intellect uses ‘Experts’ while Palantir / C3.ai focus on ‘Agents’

Findings:

- This is a philosophical distinction, not a technical one. In AI, an “Agent” is simply a system capable of autonomous reasoning and tool use.

- Intellect defines ‘Experts’ as pre-configured agents with specific banking personas (e.g. “Underwriting Expert”). This is a valid go-to-market strategy (selling a “worker” rather than a “tool”), but technically, these are still AI Agents.

- Palantir AIP Agents are used for complex “Anti-Money Laundering” investigations, “Defense Logistics,” and “Hospital Operations”, tasks far more complex than what Intellect’s “Experts” claim to perform.

- Intellect is rebranding specialized agents as “Experts” to appeal to risk-averse bankers. There is no evidence that Palantir’s agents are incapable of complex finance; in fact, Palantir serves the most complex institution in the world (US Army) using these agents.

Claim 4: Intellect offers open technology / LLM agnosticism and benchmarking, which is not offered by other players.

Findings:

- Palantir AIP is fundamentally model agnostic. Their architecture allows customers to “Bring Your Own Model” (BYOM). They support OpenAI, Anthropic (Claude), Google (Gemini), Meta (Llama), and custom open-source models. Furthermore, Palantir provides a tool called “AIP Evals” specifically designed to benchmark and evaluate the performance of different models against specific tasks, exactly what Intellect claims is missing.

- C3.ai explicitly markets its “C3 Generative AI” as an LLM-agnostic architecture that prevents vendor lock-in.

- Intellect’s feature (routing tasks to cheaper models like Llama-3 for simple queries and GPT-4 for complex ones) is a valuable feature called “Model Routing.” However, it is not unique. Palantir AIP Logic and open-source frameworks (like LangChain) allow developers to build similar routing logic.

Does that confirm Intellect doesn’t have any differentiation from Palantir & C3.ai?

Not really. Based on my research, they do have some differentiation, which management hasn’t addressed.

The biggest differentiator is that Intellect sells a finished “product” for bankers, while Palantir sells a “toolkit” for engineers.

- Palantir / C3.ai sell a blank canvas. Their AI is powerful but “ignorant” of banking. A bank must spend months teaching the system what “Compliance” or a “Letter of Credit” is by building custom logic and ontologies from scratch.

- Intellect’s “Experts” come pre-educated. Because Intellect has been in banking for decades, their agents already know the specific rules, regulatory codes, and financial ratios used in underwriting or wealth management. The logic is hard-coded out of the box.

- Palantir’s customers are global giants (e.g. JP Morgan). These banks have massive IT budgets and armies of data scientists. They want a blank canvas (Palantir) so they can build a proprietary, custom system that gives them an edge over competitors.

- Intellect’s customers are banks that do not have 500 data scientists to configure Palantir. They need a “plug-and-play” solution that works immediately. They are buying banking outcomes, not AI infrastructure.

- The “Black Book”: Intellect has mapped banking to 386 microservices, ~750 events, and 2,000+ APIs to create a finite architecture. Management claims a 2-3 year competitive moat due to their “Black Book” of deep banking domain knowledge.

The Context Advantage

- Palantir sees data as objects. It treats a loan application and a tank maintenance log as the same thing, just data points to be linked.

- Intellect sees data as “Banking Transactions.” Because they own the core banking system, their AI understands the context of the data natively. It knows that a sudden spike in a certain type of transaction is a specific type of fraud risk without needing to be told.

High Switching Costs: Intellect pursues “Destiny Deals” (large multi-year deals) that span multiple product lines (e.g. Core Banking + Payments + AI). Once Purple Fabric is integrated as the decisioning layer across these critical systems, displacing it becomes operationally perilous. The platform becomes the “institutional memory” of the bank, increasing stickiness.

Some additional points of interest:

- Europe-First Strategy: By deploying Purple Fabric in Europe first (the world’s strictest regulatory environment for GDPR, Data Residency, and Ethical AI), they have already cleared the highest hurdle. When Intellect pitches to Indian or Asian banks, they aren’t selling a beta product; they are selling a system certified for European Sovereign Wealth Funds. A new entrant / startup cannot replicate this compliance heritage without years of audits and legal certifications.

- Management claims Purple Fabric is being used in the UK by one of the largest wealth management companies & one of the largest Norwegian Sovereign Funds.

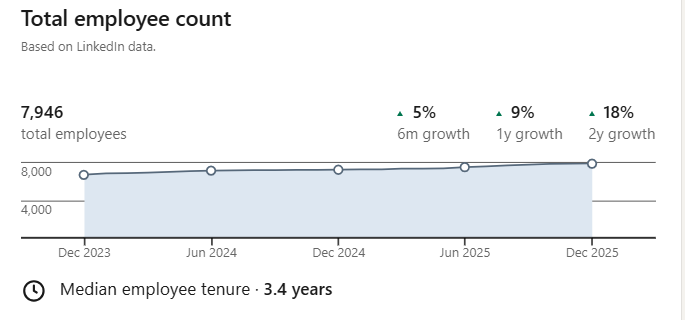

- Intellect has 1200+ research engineers. That is not easy to replicate, particularly in the West, where research engineers are far more expensive.

- Intellect has a cash balance of ~976 Cr and is effectively debt-free. They can aggressively use this for R&D & marketing and stay ahead of the curve in India.

- Institutional Trust & Stickiness: Because they already run critical systems (Core Banking / Insurance) for these clients, they are a trusted vendor. A Chief Risk Officer will not let an unknown startup’s AI handle “Credit Memos” or “Underwriting Decisions” given the high cost of failure.

Comparison Table: Purple Fabric vs. Palantir vs. C3.ai (Generated using Gemini Deep Research)

| Feature | Purple Fabric (Intellect) | Palantir (Foundry) | C3.ai |
|---|---|---|---|
| Primary Domain | BFSI specialist (banking, insurance, wealth). Built on 30 years of banking IP. | Generalist / defense. Origins in intelligence, expanded to corporate. | Industrial / IoT. Strong in energy, manufacturing, and predictive maintenance. |
| Core Pivot | Application lifecycle. AI embedded into banking workflows. | Data ontology. Creating a semantic layer over disparate data. | Model lifecycle. Enterprise AI suite for deploying models at scale. |
| User Experience | Low-code / business user. “Simpler to use.” Designed for bankers. | Engineering heavy. High complexity; often requires Forward Deployed Engineers. | Data-science heavy. Geared towards technical teams. |
| Agentic Approach | Digital Experts & Socrates Dialogue. Structured multi-agent debate. | AIP (Artificial Intelligence Platform). Focus on connecting LLMs to the data ontology. | C3 Generative AI. Enterprise search and domain-specific co-pilots. |
| Financial Model | Bootstrap / value pricing. Funded from balance sheet ($15M/yr R&D). | VC / IPO funded. Massive capital burn to drive adoption. | VC / IPO funded. High customer acquisition costs. |
| Regulatory Fit | High. Built with specific banking guardrails (DIMS, traceability). | Medium/High. Strong governance but requires heavy configuration for banking. | Medium. Strong on industrial standards, less native to banking compliance. |

Much of the above relies on buzzwords and assumes some prior technical knowledge of systems and AI, so let me summarise it with an analogy.

Purple Fabric = Red Bull Formula 1 Lego Set

- The F1 Lego set comes with an instruction manual on how to assemble it. Similarly Purple Fabric comes with an instruction manual which they call a “Black Book”. It is a definitive manual of 386 microservices, ~750 events, and 2,000+ APIs to create a finite architecture.

- In the above Lego set, some parts (like wheels) come pre-molded. Similarly, Purple Fabric comes with pre-built “Digital Experts” (e.g. Complaints Manager). You don’t build the wheels or the complaints manager; you just snap them into the right place.

- As a result, you get a working Formula 1 car (core banking) very quickly. However, if you suddenly decide you want to build a spaceship instead, you can’t, because you don’t have the parts.

Palantir’s Foundry = Giant Bin of Mixed Lego Blocks

- Palantir gives you a massive, high-quality baseplate called the “Ontology,” but it’s blank. It doesn’t tell you “put brick A here.” You must define the rules: Does a blue brick represent a Customer, a Tank, or a Patient? You have to write the manual as you build.

- Because the blocks aren’t pre-molded into an engine or a wheel, you can build anything: a Formula 1 car (Core Banking), a Tank (Defense), or a Hospital (Healthcare).

- Building a Formula 1 car without a manual is incredibly difficult. This is why Palantir relies on “Forward Deployed Engineers”: expensive “Master Builders” who fly to your office to help you find the right bricks and assemble them.

Valuations

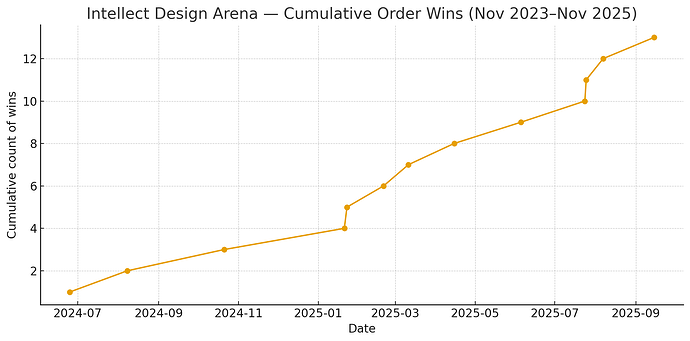

- Management has guided for ~200 Cr of Purple Fabric revenue in FY26.

- Management believes Purple Fabric can be a 1000 to 5000 Cr revenue business. They are confident of 1000 Cr in 4 years and are working on plans to take it to 5000 Cr.

- In FY25, they had guided to take margins from 20% to 25% in FY26. However, they have accelerated investments in Purple Fabric, so they don’t think they will be able to achieve a 25% margin.

- Management is confident of growing revenue at a 20% CAGR for the next 4-5 years, with PAT growth of 30-40% due to operating leverage. They are also confident of increasing margins by 200 to 300 basis points for the next 3-5 years.

- At 35x TTM earnings, we are buying a business that can grow its bottom line at a 35% CAGR for the next 4-5 years, with optionality on how well management can scale and sell Purple Fabric.

- Best Case: Purple Fabric becomes a 5000+ Cr business in 4 years with 30 - 40% margin.

- Worst Case: They are not able to scale Purple Fabric beyond 1000 Cr and use it as an add-on to win more destiny deals.
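For anyone who wants to check the arithmetic behind these numbers, here is a quick calculation. The inputs come from management’s guidance above; the assumptions are mine: the 200-300 bps margin expansion is read as an annual step on the same margin that PAT tracks (starting from the 20% mentioned for FY25), and the share price is held flat when computing the forward P/E.

```python
def cagr(start, end, years):
    """Annual growth rate needed to compound `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


def profit_growth(revenue_growth, margin_now, margin_next):
    """One-year profit growth when revenue grows and the margin expands."""
    return (1 + revenue_growth) * (margin_next / margin_now) - 1


def forward_pe(current_pe, earnings_cagr, years):
    """P/E on earnings `years` out, assuming the share price does not move."""
    return current_pe / (1 + earnings_cagr) ** years


# Purple Fabric: ~200 Cr guided for FY26, to 1000 Cr (or 5000 Cr) in 4 years.
print(f"{cagr(200, 1000, 4):.1%}")  # 49.5%
print(f"{cagr(200, 5000, 4):.1%}")  # 123.6%

# 20% revenue growth plus 200 or 300 bps of margin expansion on a 20% base.
print(f"{profit_growth(0.20, 0.20, 0.22):.0%}")  # 32%
print(f"{profit_growth(0.20, 0.20, 0.23):.0%}")  # 38%

# 35x TTM earnings, PAT compounding at 35% a year for 5 years.
print(f"{forward_pe(35, 0.35, 5):.1f}x")  # 7.8x
```

Put simply: the 1000 Cr target needs roughly 50% annual growth; 20% revenue growth plus 200-300 bps of yearly margin expansion is consistent with the 30-40% PAT growth guided; and if that PAT growth does materialise, today’s price would be under 8x year-5 earnings.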

Disclaimer: Invested & Biased.